Our commitment to responsible AI

HoopAI is committed to developing AI features that empower businesses while protecting the people who interact with them. Every AI capability we build, from Conversation AI to Voice AI to Content AI, is designed with safety, privacy, and transparency as foundational requirements, not afterthoughts. We continuously evaluate our AI systems against evolving industry standards, regulatory requirements, and ethical frameworks. Our commitment extends beyond compliance. We aim to set a high bar for responsible AI use in the business automation space.

Core principles

HoopAI’s approach to AI is guided by five core principles. These principles inform every decision we make about AI features, from initial design through deployment and ongoing monitoring.Transparency

Users and their customers deserve to know when they are interacting with AI. HoopAI is committed to making AI interactions clear and understandable.- Disclosure: We provide tools and guidance to help you inform your customers when they are communicating with an AI agent rather than a human.

- Explainability: AI decisions and responses should be understandable. When an AI agent takes an action, such as booking an appointment or qualifying a lead, the reasoning should be traceable.

- Honest capabilities: We are upfront about what our AI can and cannot do. See our limitations page for a candid assessment.

Safety

AI systems must not cause harm. HoopAI builds multiple layers of safety into every AI feature.- Content filtering: Built-in safeguards prevent AI agents from generating harmful, offensive, or inappropriate content.

- Guardrails: Configurable guardrails let you control the boundaries of AI behavior for your specific use case.

- Escalation paths: AI agents are designed to recognize when a situation requires human intervention and can seamlessly hand off conversations.

- Testing and validation: Every AI feature undergoes rigorous testing before release, including adversarial testing for edge cases.

Privacy

Protecting personal data is non-negotiable. HoopAI’s AI features are designed with privacy by design principles.- Data minimization: AI agents only access the data they need to perform their function.

- No third-party training: Your data and your customers’ data are never used to train third-party AI models. Read our full data privacy policy.

- Retention controls: You have control over how long AI conversation data is retained.

- Encryption: All AI data is encrypted in transit and at rest.

Fairness

AI systems must treat all users equitably, regardless of their background, identity, or characteristics.- Bias monitoring: We actively monitor our AI systems for signs of bias in responses and recommendations.

- Inclusive design: AI features are designed to work effectively across diverse populations, languages, and communication styles.

- Regular audits: We conduct periodic reviews of AI outputs to identify and address any fairness concerns.

- Feedback loops: User reports of unfair or biased AI behavior are prioritized and investigated promptly.

Human oversight

AI should augment human decision-making, not replace it. HoopAI ensures humans remain in control.- Human-in-the-loop: Critical decisions always involve human review. AI agents can recommend, but humans approve.

- Override capabilities: You can always override, pause, or shut down AI agents instantly.

- Monitoring dashboards: The Conversation AI dashboard gives you full visibility into what your AI agents are doing.

- Approval workflows: For sensitive industries, you can configure approval workflows that require human sign-off before AI actions are executed.

How AI features are designed with safety in mind

Every AI feature at HoopAI goes through a structured development process that prioritizes safety at each stage.Design phase

Before any code is written, our team evaluates the potential risks and impacts of a new AI feature. This includes identifying possible misuse scenarios, defining acceptable behavior boundaries, and establishing success criteria that include safety metrics alongside performance metrics.Development phase

During development, safety guardrails are built into the architecture from the start. This includes input validation, output filtering, rate limiting, and error handling. Our engineering team follows secure development practices as outlined in our security best practices.Testing phase

AI features undergo multiple rounds of testing, including:- Functional testing to verify the feature works as intended

- Adversarial testing to identify vulnerabilities to misuse or manipulation

- Bias testing to check for unfair treatment of different user groups

- Edge case testing to evaluate behavior in unusual or unexpected scenarios

- Load testing to ensure safety mechanisms remain effective under high traffic

Deployment and monitoring

After launch, AI features are continuously monitored for performance and safety. We track metrics such as response accuracy, user satisfaction, escalation rates, and safety incident reports. Any issues identified trigger an immediate review and remediation process.User responsibilities when deploying AI agents

While HoopAI provides the tools and safeguards for responsible AI use, you play a critical role in ensuring your AI deployments are ethical and effective. Here are your key responsibilities.Understand your AI agents

Before deploying any AI agent, take the time to understand how it works, what data it accesses, and what actions it can take. Review your bot settings carefully and test your agent thoroughly before making it live.Configure appropriate guardrails

Use the guardrails tools available to you. Set clear topic boundaries, define what information the AI should and should not share, and establish escalation triggers for sensitive situations.Train your AI properly

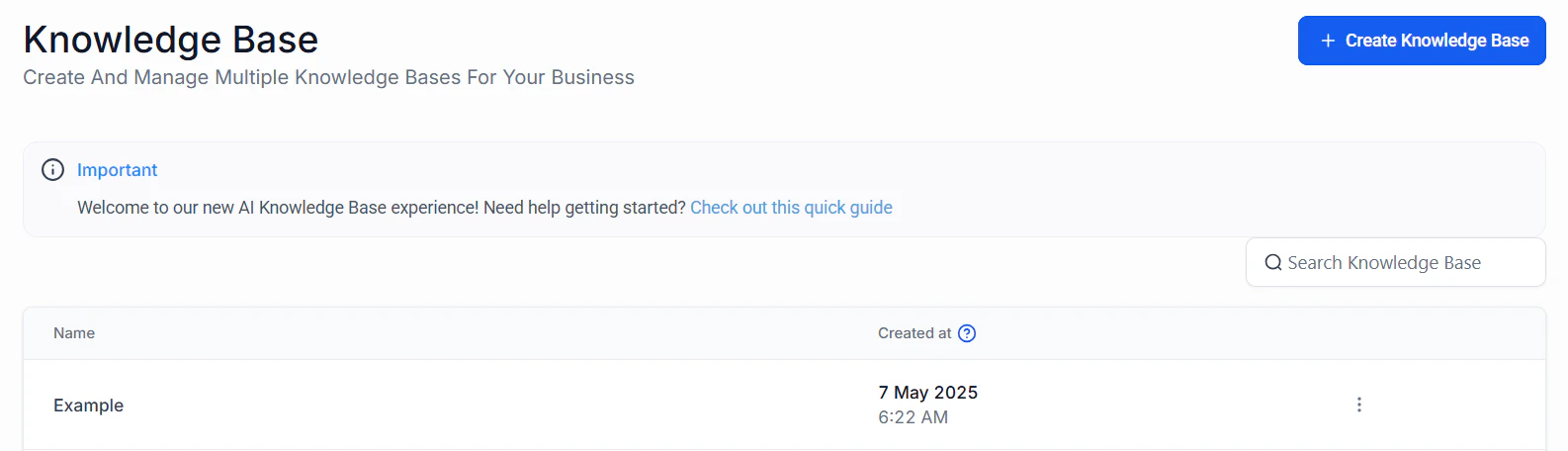

The quality of your AI agent’s responses depends heavily on the training data and instructions you provide. Use your knowledge base to give your agent accurate, up-to-date information. Follow prompt engineering best practices to guide your agent’s behavior effectively.Monitor and review

Regularly review your AI agent’s conversations and performance metrics on the Conversation AI dashboard. Look for:- Responses that are inaccurate or misleading

- Conversations where the AI failed to escalate appropriately

- Patterns of user frustration or confusion

- Any instances of inappropriate or harmful content

Stay current with regulations

AI regulations are evolving rapidly. Stay informed about the compliance requirements that apply to your industry and jurisdiction. HoopAI provides guidance, but you are ultimately responsible for ensuring your AI deployments comply with applicable laws.Disclosure best practices

One of the most important aspects of responsible AI use is transparency with the people who interact with your AI agents. Here are best practices for disclosure.Why disclosure matters

Informing users that they are communicating with AI is not just an ethical obligation. In many jurisdictions, it is a legal requirement. Disclosure builds trust, sets appropriate expectations, and helps prevent misunderstandings.When to disclose

You should disclose AI involvement in the following situations:- Chat interactions: When a customer initiates a conversation with an AI-powered chatbot

- Voice calls: When an AI agent handles inbound or outbound phone calls

- Email and SMS: When AI generates or sends automated messages

- Content generation: When AI creates content that is presented as coming from your brand

- Decision support: When AI influences decisions that affect customers, such as lead scoring or appointment prioritization

How to disclose effectively

Chat and messaging disclosure

Chat and messaging disclosure

Include a clear statement at the beginning of AI-powered conversations. For example:Avoid misleading names or personas that could make users believe they are talking to a human.

Voice AI disclosure

Voice AI disclosure

For voice interactions, begin the call with a brief, natural-sounding disclosure:Many jurisdictions require explicit disclosure at the start of AI-initiated calls. Check your local regulations and review our compliance guide.

Email and SMS disclosure

Email and SMS disclosure

Include a note in automated messages indicating they were generated or sent with AI assistance. This can be subtle but should be clear:

Website and form disclosure

Website and form disclosure

If AI powers features on your website such as chatbots, recommendation engines, or dynamic content, include disclosure in your privacy policy and, where appropriate, near the AI-powered feature itself.

Disclosure templates

AI ethics considerations for businesses

Deploying AI in a business context raises important ethical questions. Here is guidance for navigating them responsibly.Customer trust and expectations

Your customers trust you with their data and their time. When you deploy AI, you are extending that trust relationship to an automated system. Consider:- Will your customers feel comfortable interacting with AI?

- Are you using AI to genuinely improve the customer experience, or primarily to cut costs?

- How will you handle situations where AI makes a mistake that affects a customer?

Employee impact

AI automation can change the nature of work for your team. Consider the impact on employees and plan accordingly:- AI should handle repetitive tasks so your team can focus on high-value, human-centric work

- Provide training so your team understands how to work alongside AI tools effectively

- Be transparent with your team about how AI is being used and its limitations

Industry-specific considerations

Different industries have different ethical considerations when it comes to AI.- Healthcare

- Financial services

- Legal

- Real estate

AI should never replace professional medical judgment. Use AI for administrative tasks like scheduling and intake, but ensure that any health-related interactions include clear disclaimers and easy access to qualified professionals. Review our HIPAA compliance guidance.

Continuous improvement

Responsible AI use is not a one-time effort. It requires ongoing attention.- Regularly review and update your AI agents’ training data and instructions

- Stay informed about new AI capabilities and safety features as HoopAI releases them

- Participate in feedback programs to help us improve AI safety and performance

- Share learnings with your team and industry peers

Trust and safety resources

This section of the documentation provides comprehensive guidance on every aspect of AI trust and safety at HoopAI. Explore the pages below for detailed information on specific topics.Data privacy and AI

How HoopAI handles data in AI features, including processing, retention, consent, and GDPR compliance.

AI guardrails and content safety

Configure safety guardrails for your AI agents to prevent harmful, off-topic, or inappropriate responses.

AI compliance

Compliance requirements for AI features including GDPR, HIPAA, TCPA, and industry-specific regulations.

AI limitations and transparency

Understand what HoopAI’s AI can and cannot do, with guidance on human oversight.

Security best practices for AI

Secure your AI deployments with best practices for access control, prompt injection prevention, and monitoring.

Next steps

Configure guardrails

Set up safety boundaries for your AI agents before going live.

Review compliance requirements

Ensure your AI deployment meets regulatory requirements for your industry.

Understand AI limitations

Set realistic expectations by understanding what AI can and cannot do.

Secure your AI deployment

Follow security best practices before launching customer-facing AI agents.