Models behind HoopAI features

HoopAI does not expose raw model names in the interface. Instead, the platform abstracts model selection into feature-level capabilities. Under the hood, every AI-powered feature is mapped to a specific model (or combination of models) that has been tested and validated for that use case.

Why HoopAI manages model selection

- Reliability — Each feature is matched to a model that consistently meets quality thresholds for that task.

- Cost efficiency — Simpler tasks use lighter models to keep your usage costs low, while complex tasks use more capable models.

- Seamless upgrades — When a better model becomes available, HoopAI can switch transparently without breaking your workflows.

Capabilities matrix

The table below maps each major AI feature to the model tier that powers it, along with key characteristics.| Feature | Model tier | Primary provider | Input types | Typical response time | Context window |

|---|---|---|---|---|---|

| Conversation AI | Advanced LLM | OpenAI / Anthropic | Text, images | 1 — 4 seconds | Up to 128K tokens |

| Voice AI | Speech-optimized LLM + TTS/STT | OpenAI / Anthropic | Audio (real-time) | Sub-second streaming | Up to 32K tokens |

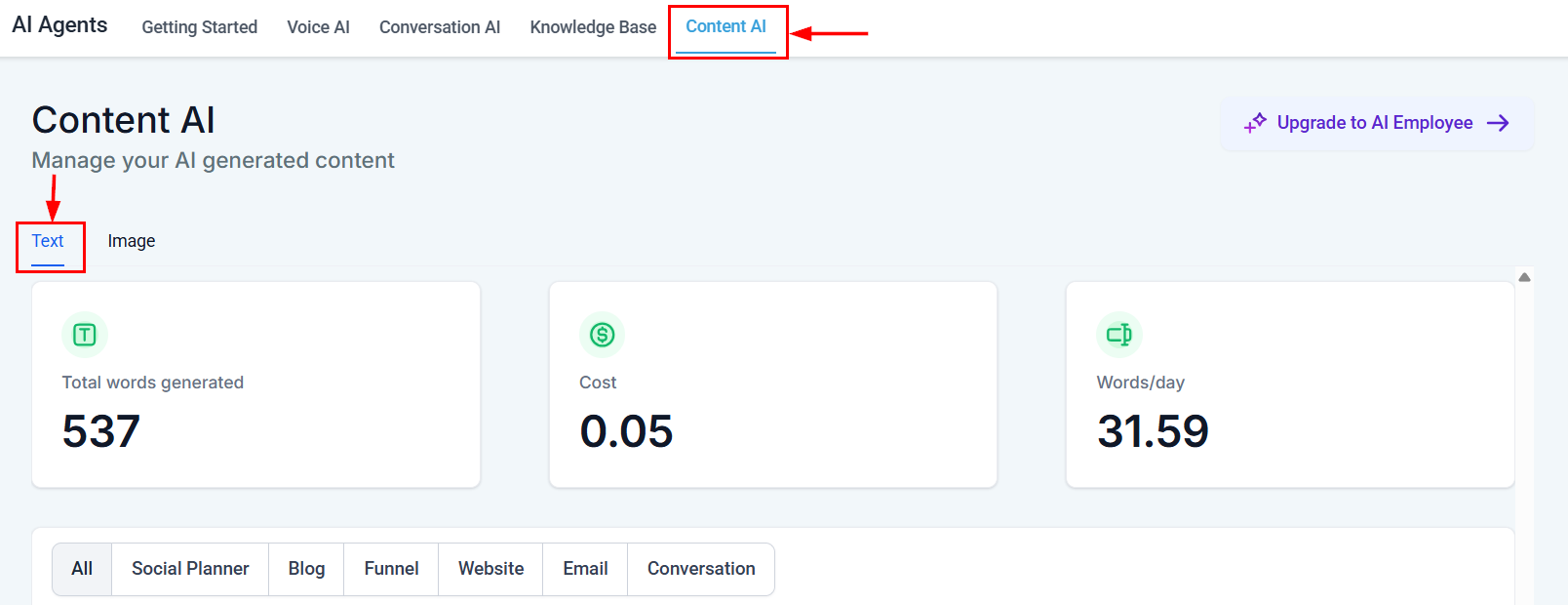

| Content AI | Advanced LLM | OpenAI | Text, images | 2 — 8 seconds | Up to 128K tokens |

| Reviews AI | Mid-tier LLM | OpenAI | Text | 1 — 3 seconds | Up to 16K tokens |

| Workflow AI | Advanced LLM | OpenAI / Anthropic | Text, structured data | 1 — 5 seconds | Up to 128K tokens |

Model tiers and providers may change as HoopAI continuously evaluates the best options. The platform always selects models that meet or exceed the performance benchmarks for each feature.

Model tier definitions

- Advanced LLM — The most capable general-purpose language models, used for tasks that require nuanced understanding, long context, or complex reasoning.

- Mid-tier LLM — Highly capable models optimized for speed and cost when the task is well-scoped (for example, generating a review response from a template).

- Speech-optimized LLM + TTS/STT — A combination of a language model for dialogue management, a speech-to-text (STT) engine for transcription, and a text-to-speech (TTS) engine for voice synthesis.
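As an illustration only, the capabilities matrix can be read as a lookup table keyed by feature. The sketch below is not HoopAI's internal representation: the names `FeatureProfile`, `CAPABILITIES`, and `fits_context` are hypothetical, and it assumes that "K" in the context window column means 1,000 tokens.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeatureProfile:
    """One row of the capabilities matrix (hypothetical structure)."""
    tier: str
    input_types: tuple
    max_context_tokens: int


# Values copied from the capabilities matrix; "K" is assumed to mean 1,000.
CAPABILITIES = {
    "conversation_ai": FeatureProfile("Advanced LLM", ("text", "images"), 128_000),
    "voice_ai": FeatureProfile("Speech-optimized LLM + TTS/STT", ("audio",), 32_000),
    "content_ai": FeatureProfile("Advanced LLM", ("text", "images"), 128_000),
    "reviews_ai": FeatureProfile("Mid-tier LLM", ("text",), 16_000),
    "workflow_ai": FeatureProfile("Advanced LLM", ("text", "structured data"), 128_000),
}


def fits_context(feature: str, token_count: int) -> bool:
    """Return True if an input of token_count tokens fits the feature's context window."""
    return token_count <= CAPABILITIES[feature].max_context_tokens
```

A check such as `fits_context("reviews_ai", 20_000)` would return `False` under these assumptions, because the Reviews AI row lists a context window of up to 16K tokens.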

How HoopAI selects and evaluates models

HoopAI’s AI engineering team follows a structured evaluation process whenever a new model is released or an existing model is updated.

Benchmark testing

Every candidate model is tested against a curated set of benchmarks specific to each feature — appointment booking accuracy for Voice AI, factual grounding for Conversation AI, tone consistency for Content AI, and so on.

Quality scoring

Results are scored across five dimensions: accuracy, latency, cost per request, safety, and consistency. A model must meet minimum thresholds in all five categories to be considered.
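The "all five thresholds" rule can be sketched as a simple gate. This is a minimal illustration, not HoopAI's scoring code: the threshold values are invented, and it assumes every dimension has been normalized to a 0 to 1 score where higher is better (so latency and cost are expressed as "goodness" scores rather than raw seconds or dollars).

```python
# Hypothetical minimums. The source names the five dimensions but not their values.
MIN_THRESHOLDS = {
    "accuracy": 0.92,
    "latency": 0.80,
    "cost_per_request": 0.70,
    "safety": 0.99,
    "consistency": 0.85,
}


def failing_dimensions(scores, thresholds=MIN_THRESHOLDS):
    """List every dimension whose score is below its minimum.

    A dimension missing from scores is treated as a score of 0.0.
    """
    return [
        dim for dim, minimum in thresholds.items()
        if scores.get(dim, 0.0) < minimum
    ]


def qualifies(scores, thresholds=MIN_THRESHOLDS):
    """A candidate qualifies only if no dimension falls below its minimum."""
    return not failing_dimensions(scores, thresholds)
```

Returning the list of failing dimensions, rather than only a boolean, makes it easier to see why a candidate was rejected.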

Shadow deployment

Qualifying models run in shadow mode alongside the current production model. Real (anonymized) traffic is processed by both, and outputs are compared without affecting end users.
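A rough sketch of the shadow-mode idea follows. It assumes models can be treated as plain functions from a request string to a response string and that requests are already anonymized before they reach this point; `handle_with_shadow` and the log format are hypothetical. Exact string equality is used as the comparison only to keep the example short; a real comparison would likely use feature-specific quality metrics.

```python
from typing import Callable

Model = Callable[[str], str]


def handle_with_shadow(request: str, production: Model, candidate: Model,
                       comparison_log: list) -> str:
    """Serve the production output and record how the candidate compares."""
    production_output = production(request)
    try:
        candidate_output = candidate(request)
        comparison_log.append({
            "request": request,
            "outputs_match": production_output == candidate_output,
            "candidate_output": candidate_output,
        })
    except Exception as exc:
        # A failing candidate must never affect the end user, so the error
        # is only recorded for later analysis.
        comparison_log.append({"request": request, "candidate_error": repr(exc)})
    # The caller only ever sees the production model's answer.
    return production_output
```

In a real system the candidate call would typically run asynchronously so that it adds no latency to the user-facing response.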

Gradual rollout

Once a model demonstrates equal or better performance, it is rolled out incrementally — starting with a small percentage of traffic and scaling to full deployment over several days.
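One common way to implement an incremental rollout is deterministic hash bucketing, sketched below. The source does not say how HoopAI splits traffic, so this technique, the function name `in_rollout`, and the use of an account ID as the bucketing key are all assumptions.

```python
import hashlib


def in_rollout(account_id: str, percent: float) -> bool:
    """Return True if this account falls inside the first `percent` of traffic.

    The account ID is hashed into one of 10,000 buckets, so the same account
    always gets the same answer and raising `percent` only ever adds accounts.
    """
    digest = hashlib.sha256(account_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return bucket < percent * 100


def pick_model(account_id: str, percent: float) -> str:
    """Route to the new model for accounts inside the rollout, else the current one."""
    return "candidate" if in_rollout(account_id, percent) else "production"
```

Scaling up over several days then amounts to raising `percent` in steps (for example 1, 5, 25, 100) while watching the quality metrics between steps.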

Model update schedule and versioning

HoopAI evaluates new model versions on an ongoing basis. Updates typically follow this cadence:

| Update type | Frequency | User impact |
|---|---|---|
| Minor model patches | Monthly or as released by providers | No user action required; improvements are automatic |
| Major model upgrades | Quarterly evaluation cycle | May include new capabilities; announced in release notes |
| Provider additions | As new providers meet quality bar | Expands the pool of models HoopAI can route to |

You do not need to take any action when models are updated. HoopAI handles versioning and migration automatically. If a model change affects prompt behavior, the platform will notify you through the dashboard.

Performance characteristics

Response time ranges

Response times depend on the feature, the length of input, and the complexity of the task. The ranges below represent the 50th to 95th percentile under normal operating conditions.

| Feature | p50 latency | p95 latency |
|---|---|---|
| Conversation AI (text) | 1.2 seconds | 3.5 seconds |
| Voice AI (turn-by-turn) | 0.4 seconds | 1.2 seconds |
| Content AI (short-form) | 1.5 seconds | 4.0 seconds |
| Content AI (long-form) | 4.0 seconds | 12.0 seconds |
| Reviews AI | 0.8 seconds | 2.5 seconds |
| Workflow AI (GPT action) | 1.0 seconds | 4.5 seconds |
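If you record response times on your side, you can compute comparable p50 and p95 figures with Python's standard library. This is a sketch for your own measurements; it says nothing about how HoopAI produces the numbers in the table, and the function name `latency_percentiles` is illustrative.

```python
import statistics


def latency_percentiles(samples_seconds):
    """Return the 50th and 95th percentile of a list of latencies in seconds."""
    if len(samples_seconds) < 2:
        raise ValueError("need at least two samples")
    # quantiles(n=100) returns 99 cut points; index 49 is p50, index 94 is p95.
    cuts = statistics.quantiles(samples_seconds, n=100)
    return {"p50": cuts[49], "p95": cuts[94]}
```

The p50 value tells you what a typical request looks like, while p95 shows the slow tail that 1 in 20 requests will reach or exceed.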

Accuracy and quality

Accuracy metrics vary by feature:

- Conversation AI: Measured by intent recognition accuracy (target above 92%) and factual grounding against knowledge base sources.

- Voice AI: Measured by speech recognition word-error rate (target below 8%) and task-completion rate (target above 85%).

- Content AI: Measured by brand-voice consistency scores and human quality ratings during internal evaluations.

- Reviews AI: Measured by sentiment-match accuracy and response appropriateness ratings.

- Workflow AI: Measured by structured output validity (JSON schema compliance) and action-execution success rates.
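To make the Voice AI metric concrete, word-error rate (WER) is conventionally the word-level edit distance between a reference transcript and the recognized text, divided by the number of reference words. The sketch below uses that standard definition; the lowercasing and whitespace tokenization are simplifying assumptions, and HoopAI's exact normalization is not described in the source.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")

    # prev[j] is the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1        # reference word missing from hypothesis
            insertion = curr[j - 1] + 1   # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)
```

For the reference "book an appointment for monday" and the recognized text "book appointment for sunday", there is one deleted word and one substituted word across five reference words, so the WER is 0.4 (40%), well above the 8% target.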

Limitations

Every AI model has inherent limitations. Understanding these helps you set appropriate expectations and design better prompts. For detailed prompt-writing strategies, see Prompt engineering 101.

Text model limitations

- Hallucination — Models can generate plausible-sounding but incorrect information. Always provide a knowledge base so the model has grounded facts to reference.

- Context window limits — Very long conversations may exceed the model’s context window, causing earlier messages to be summarized or dropped. Keep prompts concise and use guardrails to prevent unbounded conversations.

- Recency — Models have a training data cutoff date and may not know about very recent events unless information is provided through your knowledge base or custom actions.

- Sensitive topics — Models include built-in safety filters. In rare cases, these filters may decline to respond to legitimate business queries. Adjusting your prompt wording usually resolves this.
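The context window limit described above can be pictured as a token budget: once a conversation exceeds it, older messages have to go. The sketch below shows the simplest "drop the oldest" strategy. It is not HoopAI's implementation (which may summarize rather than drop), the function names are hypothetical, and the 4-characters-per-token estimate is a rough rule of thumb rather than a real tokenizer.

```python
def estimate_tokens(text: str) -> int:
    """Very rough token estimate: about 4 characters per token (assumption)."""
    return max(1, len(text) // 4)


def trim_history(messages, budget_tokens):
    """Keep the most recent messages whose combined estimate fits the budget.

    messages is a list of dicts with a "content" key, oldest first.
    """
    kept = []
    used = 0
    # Walk from newest to oldest and stop at the first message that no longer fits.
    for message in reversed(messages):
        cost = estimate_tokens(message["content"])
        if used + cost > budget_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept
```

This also shows why concise prompts help: every token spent on a long prompt is a token that cannot be used to retain earlier conversation turns.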

Voice model limitations

- Background noise — Speech recognition accuracy decreases in noisy environments. Callers on speakerphone or in public spaces may experience lower transcription quality.

- Accents and dialects — While modern STT engines handle a wide range of accents, recognition accuracy can vary. Testing with your customer demographic is recommended.

- Barge-in handling — If a caller speaks while the agent is talking, the model may occasionally misinterpret the interruption. See Voice AI agent creation for barge-in configuration options.

- Latency sensitivity — Voice conversations are real-time, so even small latency increases are noticeable. HoopAI optimizes for sub-second response times but network conditions can add delay.

Image and content limitations

- Image generation style — AI-generated images may not perfectly match your brand style guide without detailed prompting.

- Content length — Extremely long content pieces (over 3,000 words) may lose coherence toward the end. Breaking content into sections and generating each separately produces better results.

- Factual claims in content — Always review AI-generated content for factual accuracy before publishing, especially for regulated industries.

Future model improvements and roadmap

HoopAI’s AI capabilities are evolving rapidly. Here is a look at what is on the horizon:

Multi-modal Conversation AI

Support for image and document analysis within chat conversations, allowing customers to share photos or PDFs and receive AI-powered responses.

Enhanced voice customization

Expanded voice cloning and customization options, including the ability to fine-tune speaking style, pacing, and emotional tone for Voice AI agents.

Smarter model routing

More granular per-task model routing that considers the specific sub-task within a feature — for example, using a reasoning-optimized model for complex customer questions and a speed-optimized model for simple FAQs.

Improved multilingual support

Broader language coverage with improved accuracy for non-English conversations, including real-time translation capabilities for Voice AI.

Custom model fine-tuning

The ability to fine-tune models on your specific business data for even higher accuracy and brand consistency, available for enterprise plans.

Next steps

AI features at a glance

See every AI feature in one comparison table.

AI pricing and usage

Understand how AI usage is billed and how to optimize costs.

Prompt engineering 101

Write better prompts for more accurate AI responses.

AI glossary

Look up AI terms used throughout HoopAI.