Access controls

- Limit AI agent access to only the team members who need it

- Use role-based permissions to control who can create, edit, or delete AI agents

- Review API tokens regularly and revoke any that are no longer needed

- Separate test and production environments when developing AI configurations

Prompt injection prevention

Prompt injection is when a user attempts to manipulate an AI agent by including hidden instructions in their messages. To mitigate this:- Include explicit instructions in your system prompt to ignore attempts to override behavior

- Use the guardrails feature to set boundaries on what your AI can discuss

- Monitor conversation logs for unusual patterns

- Test your agents with adversarial inputs before deploying

Monitoring and auditing

- Review conversation logs regularly to identify unexpected behavior

- Set up alerts for high-volume or unusual AI activity

- Track AI credit usage to detect anomalies

- Audit your knowledge base periodically for accuracy and appropriateness

Data security

- Do not include passwords, API keys, or secrets in system prompts

- Avoid storing sensitive customer data (SSN, credit card numbers) in knowledge bases

- Review the Data privacy guide for details on how HoopAI processes AI data

Next steps

Guardrails

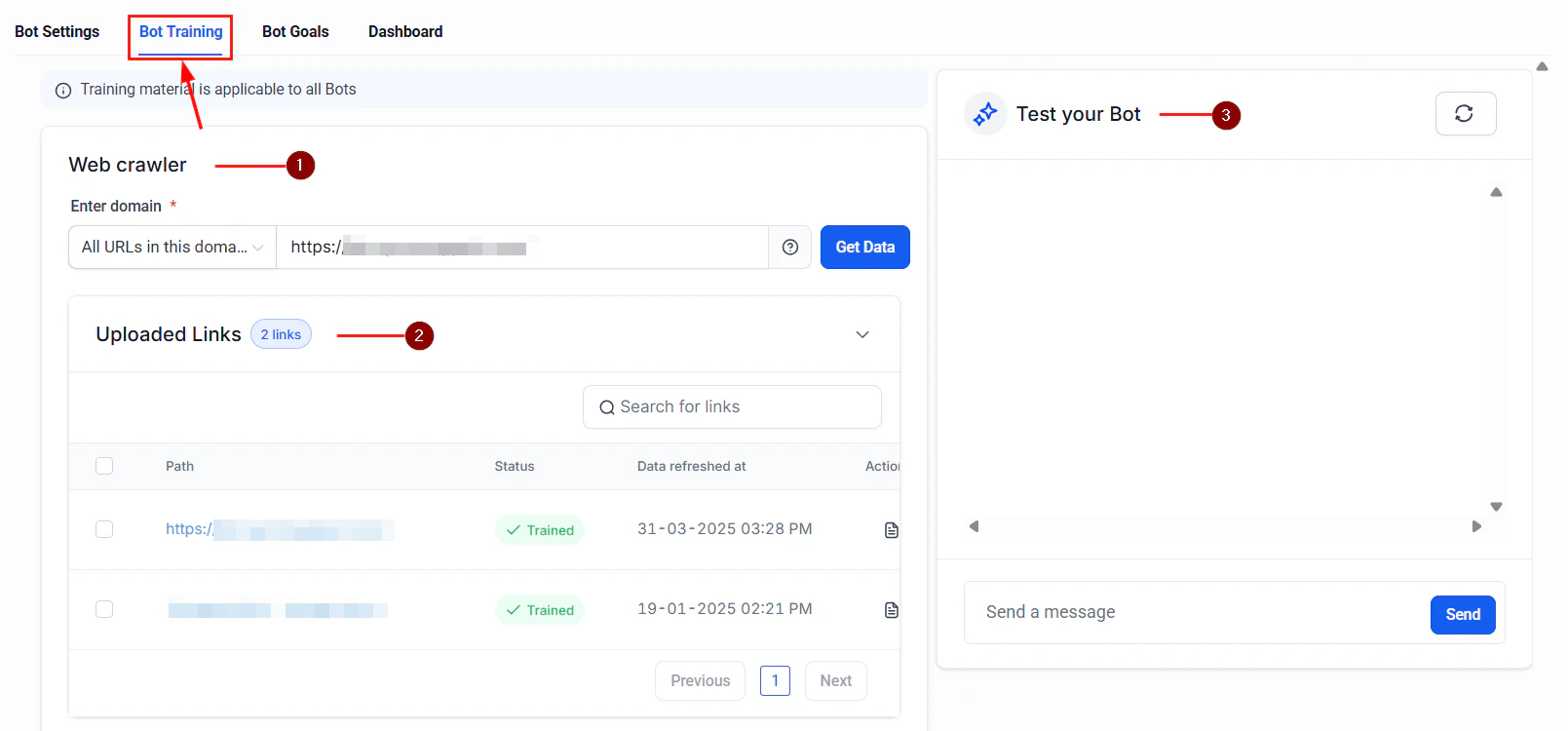

Set behavioral boundaries for your AI agents

AI safety overview

Our principles for responsible AI